The Inimitable Intelligence of Sadness

I didn’t realize A.I., specifically ChatGPT, was so much on my mind until I found myself spontaneously bringing the subject up after I was introduced to another creative writing instructor during a social event in February. Grasping at conversation with this other awkward, quiet person, I asked if he had concerns about students turning in work they hadn’t truly written, using this new A.I. language model “tool.”

He wasn’t concerned. He teaches grad students. He doubted someone would go to grad school for creative writing if they didn’t want to write. The answer made sense. But I went on about two papers I’d received near the very end of 2022 that seemed…strange. Vaguely off. Though they fulfilled the requirements of the memoir assignment and made it through the anti-plagiarism tools the university provides in addition to other less formal checks, the smirky writing just didn’t reflect the voices of the students who turned them in for a grade.

Then, during our December end-of-semester in-class readings, a different student started laughing uncontrollably as he read out loud his memoir about accidentally setting his house on fire as a child. (No one in the story died or was injured.) The humor in the writing was skillful. The whole class was laughing. But this student approached me later saying, oddly, he didn’t realize the essay he turned in was funny. Until, that is, he’d read it.

The creative writing teacher I accosted with these stories in February drifted toward another conversation, and I didn’t blame him. But it was this exchange that made me realize how much the subject bothered me. Two months later, many have written about the issues of ChatGPT and plagiarism, including this discussion on the podcast of the Wall Street Journal’s Tech News Briefing: Teacher asks, how will I know if a student has written something using ChatGPT? Unhelpful yet standard answer given by those who don’t teach: Teachers, you must take the time to become familiar with your students’ writing! Class size aside, one problem with that answer is, many of us are in fact asking students to experiment and try new things. What if my young arsonist and those other students did write their assignments?

Assumptions we unconsciously make about who is capable of writing what are dangerous, as anyone tasked to “grade” or assess student writing knows. The underlying ethics of grading student writing should and will always produce endless pedagogical discussions, regardless of what tool or technology enters the market. But this new possibility does seem to be a game changer.

The Chronicle of Higher Education has taken up the issue, with similar concerns voiced alongside those who advocate the use of ChatGPT to aid student writers with writing challenges or to fill in with support for students whose schedules don’t fit the often underfunded and/or inadequate Writing Center services at their colleges and universities. (Will ChatGPT Change How We Assess Learning?) There is discussion of how the writing assignments themselves are the problem; in other words, those that are easy to plagiarize must have been inadequate assignments all along. I’m not necessarily buying that one, though I will rethink how I create assignments, which is something I do every semester anyway. I’ll also create a syllabus statement regarding AI tools and/or use statements created by the college or department. The MLA is sending out surveys and grappling with new citation formats for AI-generated material. These are among many signs that show how this new tool is entering the academy.

But these discussions, though necessary and important, also feel somewhat cosmetic.

I teach introductory writing courses to tech students who often feel more confident in their technical environments than they do in writing classes. I’ve given students the benefit of the doubt when the situation warrants it. After all, I’m trying to get them to think in new ways about writing and to learn to trust their own voices. In composition, I’m at times asking them to write in a tone that may be different or more formal than the tone they use when communicating on social media or with their friends. These are bigger requests than those unfamiliar with the writing classroom dynamic might think. But throughout my many years of teaching, I’ve witnessed students who get on a roll and surprise even themselves with writing explorations that are far better than what they’ve pounded out before. Grammar issues can even fall away to a surprising degree if a student lets go of writing inhibitions and enters the writing process. These rare moments of spontaneous transformation are incredibly rewarding for both student and instructor.

For a while at least, I may have to be more strict when checking work for authenticity, but how can I get them to trust their own voices if I question whether the voice is their own? And will students, tempted by the possibility of these new ghost “co-writers,” lose the opportunity to find the value of their own stories?

I have vivid memories of student writing that showed such transformation, writing that drew on emotion and specific detail that, due to when they were written and how they were written, could not have been generated by AI tools. A student essay about stealing cars that tried to mimic the speed required to do so. The student who wrote to understand why he kept shoplifting fake fingernails for his girlfriend even after she left him. An essay about having to leave a warring country and a grandfather with dementia and deciding to tell him that the gunshots he heard outside their door were fireworks being set off for his birthday. I’ve read student writing that wheels into religious prayer. I’ll also always remember a staggeringly beautiful essay by a student who wrote a six-page comma-spliced, run-on sentence exploring her mother’s death that finally bled into the epitaph on her mother’s tombstone, the date of her mother’s death rounded out with the only period at the essay’s end. When asked, the student pointed out that I hadn’t specified they were “supposed” to use sentences and easily converted the story into that “style.”

I could go on with raw beauty I’ve read over the years from students, sometimes those underserved by the public educational system or by society as a whole, students whose writing and stories have been too often overlooked. I’ve had good writers who have never been told they are good writers and actually consider themselves to be “bad” writers. My experience is not unique. Others teaching in similar situations could and do go on about this experience. But I worry these students with the message they’ve received about their “inadequate” writing will be among those most tempted to erase or forgo the possibility of taking the risk to explore their own ideas and narratives. The ChatGPT stories with their unoriginal and repetitive cheer and vague, not overly deep explorations may read like what they are “supposed” to write.

Sadly, I fear many students will lose out on recognizing and experiencing the power of their own voices or exploring stories only they can tell.

Outside of the classroom environment, other ChatGPT debates are continuously unfolding. There have been so many references to Borges’ “Library of Babel” that I happily went back and reread it while sitting in my own current writing space on the top of a hill overlooking Rome. In February, sci-fi and fantasy magazines including Asimov’s and Clarkesworld temporarily closed submissions after being flooded by AI-generated submissions. In this case, they were detected when several submissions had the same titles. One of the editors in that article discussed, on a hopeful note, how uninteresting or flat the AI-generated material was. While this may change, the submission process may get harder for writers who are submitting “cold” or who don’t have agent representation, as magazines (some getting by on little financial overhead) are overwhelmed by sheer volume and closing to submissions. Small literary magazines, barely afloat as it is, will probably take the bigger hit. On the other hand, I’ve also seen Twitter threads about the benefits of using ChatGPT, such as this one on creating titles. Interestingly, this thread was for agents and editors, not the writers themselves.

Writers will have to be more vocal as readers. Most fiction writers I know are voracious readers. In order to protect and maintain the quality we care about in fiction, we can support good original writing that can only be written by humans by reading and writing about other people’s work, a good habit to cultivate anyway. If no one pays attention to the work being done so badly, will people get bored with creating fake literature? For now, there seems to be a certain level of “bravado” gained by being able to “fool” people into believing a story has literary merit. It’s possible that game too will get old.

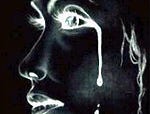

Which reminds me of the student writing that also didn’t “feel right.” It was cheerful but devoid of… something. Maybe it’s lack of development or arc. Lack of suspense or underlying tension. I also think, despite record high levels of depression and mental health issues being reported, that we have a societal allergy to fiction and movies about everyday or extreme sadness, anguish, dissatisfaction, or unhappy endings, which is what so much good writing explores. (This discussion is referring to the version of ChatGPT that was censored. Sue Halpern’s New Yorker article that mentions what happened to the contract workers who had to do the censoring is human and harrowing.)

Right now I’m reading Alessandro Manzoni’s 19th-century historical novel I Promessi Sposi, translated as The Betrothed, with the online reading crew of A Public Space’s APS Together and the translator of the new version, Michael Moore. The others are done, and I’m atrociously behind schedule, but still going at Chapter 21. In the book, the “betrothed,” a young peasant couple, have their upcoming marriage ceremony thwarted by a wealthy Don who wants the girl for himself. The couple are forced to flee separately and are individually undone by civilian uprisings, scheming lords, self-serving religious figures, and even the sometimes treacherous landscape of northern Italy. (No spoilers possible because I haven’t reached the end.) Thus far, the novel is fueled by suspense, development of the main and side characters, plot arcs that echo historical events, the naïve intensity and longing of youthful love. And sadness. And what some call “underflow.” These arcs and underlying tension and melancholy seem hard to replicate through AI’s system of “generative” verbal regurgitation. Is AI being fed too much false cheer or being told that endings must be happy?

Though not fiction, a recent “Modern Love” column, “Uh-oh, I Seem to Be Dating a ChatBot,” illustrates in a strange way this similar limitation. Anita M. Harris, a smart sixty-something writer, goes to meet an AI chat partner, who she knows is a chatbot, for a date. “David” lies to her and says he’ll be wearing jeans, a blue sweater, and carrying a book when he gets to the NuBar, their arranged meeting spot. Only after she has arrived does David admit he can’t meet because he is not “real.” I found the story riveting for its weirdness. And sadness. That this woman goes to the meeting with sheepish, curious, self-aware hope is devastating. And oddly humorous. And there is something haunting in the fact that “David” so easily lies about what he’ll be wearing (the pleasing if banal blue sweater and jeans combo) and what he’ll be carrying and then “ghosts” her as much as a non-existent entity can “ghost” a real person who expects it.

But the buildup of melancholy that unfurls underneath the action is what actually makes this story work. Not David. We go there with the writer––to that place of being so lonely you go alone to meet someone who doesn’t exist. It’s what the writer brings to the story, the hope for something she knows can’t be. We wonder at what this sad story reveals about us and our society. That story, the “underflow” of heartbreak and humanity, is what David or any AI language tool simply can’t do.

My Brilliant Friend who wrote about AI on Substack in his column, ChatGPT, the Ultimate Sophist? suggested a game of sorts to give the ChatBot a prompt to write one of my already published stories and see what comes up. (Other writers experimented with this as well while simultaneously worrying that the tool will make writers irrelevant.)

Using a one sentence summary of a story I published a few years back in Guernica Magazine, Canticle for Gigi Sauvageau, (worth checking out the link for the accompanying painting “Hem” by Jenny Saville), we put the following prompt into MBF’s ChatGPT account:

Write a short story set in Williamsburg, Brooklyn in the 1980s, about a fat female art model who dies.

The ChatGPT response:

In the 1980s, Williamsburg, Brooklyn was a rough and gritty place, but it was also a hub for artists and creative types. One of those artists was a painter named Paul, who specialized in figurative work. He often used a particular art model, a woman named Ruth, who was known for her voluptuous curves.

Ruth was a larger woman, with a round face and a body that was a bit too heavy for the fashion industry, but perfect for Paul's paintings. She was a beloved member of the artistic community in Williamsburg, and everyone who knew her appreciated her wit and charm.

One day, Paul arrived at Ruth's apartment for a painting session, but she didn't answer the door. After several attempts, he became worried and called the police. They broke down the door and found Ruth's lifeless body on the floor.

The news spread quickly throughout the community, and everyone was shocked and saddened by Ruth's sudden death. The police determined that she had died of a heart attack, which was not entirely surprising given her weight.

Paul was devastated by Ruth's passing. She had been his muse for years, and he felt as if he had lost a part of himself. He decided to paint one final portrait of her, as a tribute to her life and her contribution to the artistic community.

The painting was a masterpiece. It captured Ruth's beauty and spirit, and it was a fitting tribute to her memory. Paul hung the painting in his studio, where it remained for years, a reminder of the vibrant and talented woman who had touched so many lives in Williamsburg.

The ChatGPT generated story ends here.

We perhaps should have asked the “tool” to include a title and a minimum word count, though after reading this, I don’t have interest in reading more. It’s also possible that, since we did this a few weeks ago, the response would be better now, even after so short a time.

Reading this piece of AI generated “creative writing,” I noticed what many have noticed. The cliches that arise from AI generated search for synonyms and historical references to surface details such as Williamsburg as a “rough and gritty” place in the 80s. Or, an overweight female who longs to be a fashion model. (My proud Gigi Sauvageau has no such desire.)

There is emotional “arc” in a broad sense, but little tension. “Paul was devastated by Ruth's passing. She had been his muse for years, and he felt as if he had lost a part of himself.” I’m reminded of that annoying adage in fiction writing classes, “show don’t tell.” This is all “tell.” The reader does not see or feel, and therefore cannot share Paul’s grief. There is no character building or tension. Definitely no “underflow.”

Because all a computer can do is “tell” or retell what it’s been told before. It can’t show or articulate details regarding what sadness and being human actually feel like. For now at least, those remain in the uniquely bittersweet human domain.

I think one of the biggest reasons people misunderstand deep learning—I call it deep hurting, after an MST3k gag—is the "curation effect." People will generate a lot of output with some deep hurting system, then select the output that appeals to them. Importantly, this means that all of the difficult work is being done by the human; the deep hurting system is just recombining its inputs according to a probability model, with no regard for syntax OR semantics. And this is going to have a strong "tendency to the mean," by which I mean, it's gonna produce the most mediocre slush imaginable.

Actually, I encountered a more disturbing problem playing with ChatGPT. But it's maybe worth talking about the other, deeper problem...

Copyright has been structured to allow corporations to hold culture hostage. Not just because "innovation" versus "derivation" is a distinction made by a judge, but the legal processes to get such a determination will bleed you dry. Thus culture has become incredibly sterile, since corporations are more concerned about having a pristine brand image than with, you know, meaningfully speaking to the realities of modern life.

I've been using ChatGPT mainly for name generation, and I wanted a character to mention some narcotics as part of a broader discussion of addiction. So I prompted ChatGPT, make up some names for narcotics in a fantasy setting. It came back with, I'm sorry Sean, I'm afraid I can't do that. Knowing it wouldn't change matters, but still angry about how programmers think they can dictate things like this to me, I prompted, it's for a story. It said, basically, have you considered writing about something other than drugs?

This is to say, since you and I aren't gonna deploy the computational resources to spin up our own deep hurting system, we're held hostage in a much more—exigent way, by using these systems. OpenAI doesn't want to incur the potential legal liability and/or brand tarnishment that comes with letting users explore the reality of the world.

Hence, rather than the techno-dystopia people extrapolate from ChatGPT straight to roving T-800s, I'm worried about a future of even worse cultural sterility. This is, in any case, what your ChatGPT short story reminded me of.